Summary

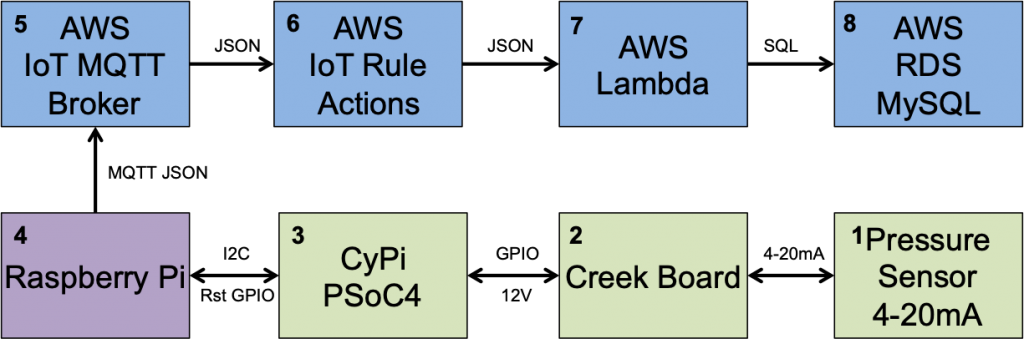

In this article, I will show you how to use the AWS IoT rules engine to make the last connection required in the chain of data from the Creek Sensor all the way to the AWS RDS Server. I will also show you the AWS CloudWatch console. At this point I have implemented:

- 4 – The Raspberry Pi connection

- 5 – The AWS IoT MQTT Broker

- 7 – The AWS Lambda Function

- 8 – The AWS RDS MySQL Server

Let’s implement the final missing box (6) – The AWS IoT Rules.

The AWS Rules

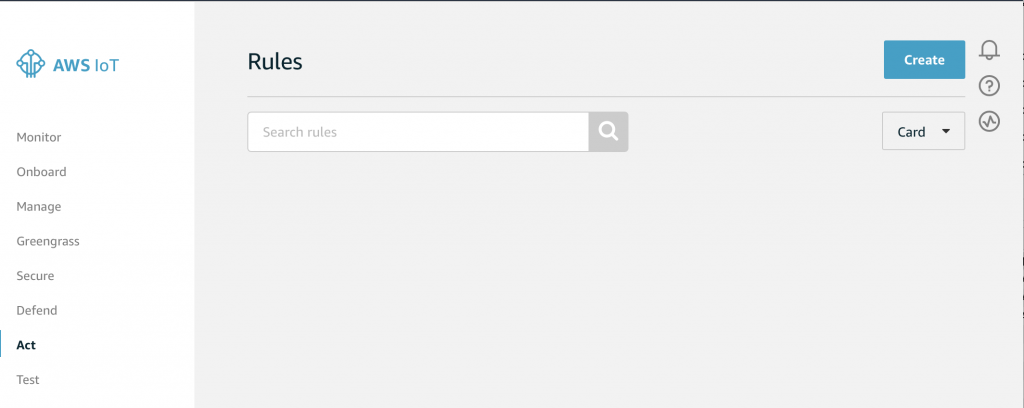

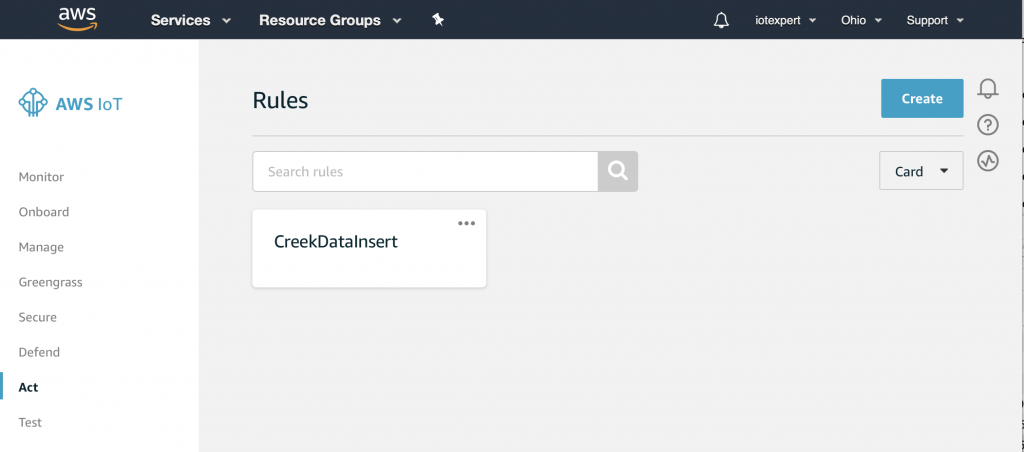

Start by going to the AWS IoT Console. On the bottom left you can see a button named “Act”. If you click Act…

You will land on a screen that looks like this. Notice that I have no rules (something that my wife complains about all of the time). Click on “Create” to start the process of making a rule.

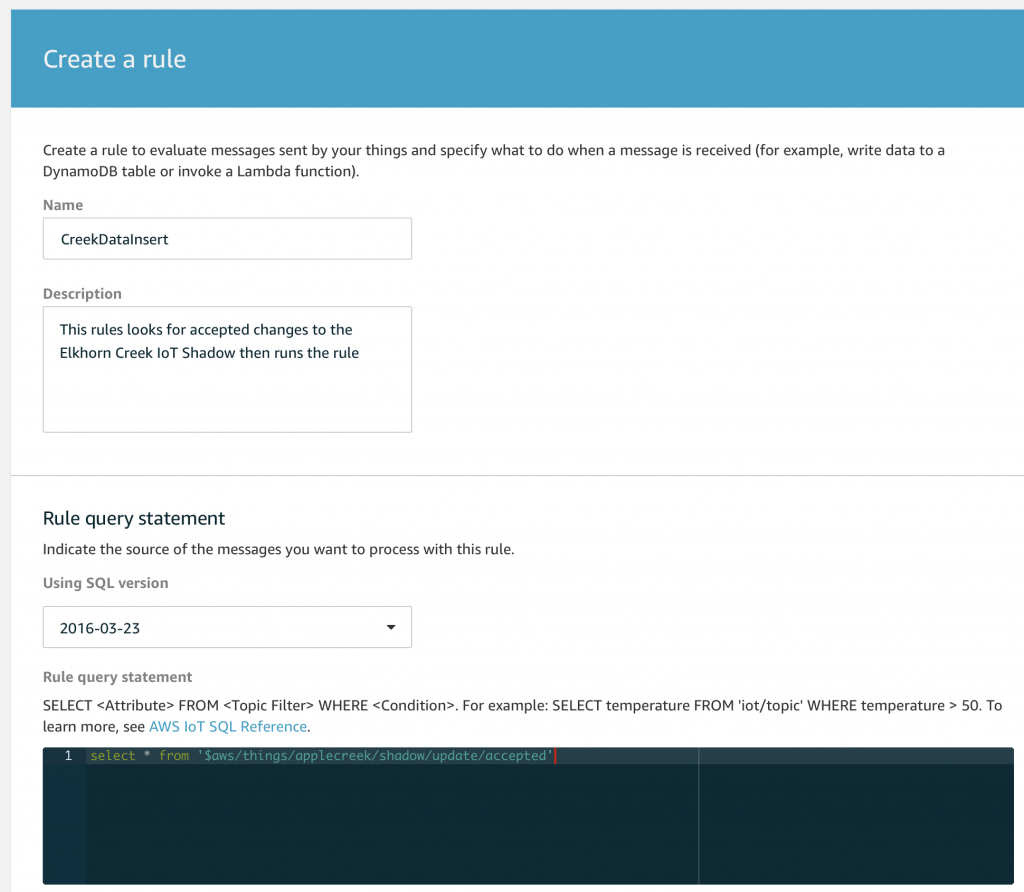

On the create rule screen I will give it a name and a description. Then, I need to create a “Rule query statement”. A rule query statement is an SQL-like command that matches topics and, optionally, conditions on the data published to those topics. Below, you can see that I tell it to select “*”, which is all of the attributes in the message, and then I give it the name of the topic. Notice that you are allowed to use the normal MQTT topic wildcards # and + to match multiple topics.
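To make the wildcard behavior concrete, here is a minimal sketch of how MQTT topic filters match topics: + matches exactly one level, and # matches everything from its position down. This is my own simplified illustration, not the code AWS runs, and the function name `topic_matches` is made up for the example.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if `topic` matches an MQTT filter that may use + and #.

    Simplified illustration only (hypothetical helper, not an AWS API).
    """
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")

    for i, level in enumerate(filter_levels):
        if level == "#":
            # Multi-level wildcard: only valid as the last level of the filter,
            # and it matches everything from this point down.
            return i == len(filter_levels) - 1
        if i >= len(topic_levels):
            # The filter has more levels than the topic.
            return False
        if level != "+" and level != topic_levels[i]:
            # A literal level must match exactly; + accepts any single level.
            return False

    # No # was seen, so the level counts must agree.
    return len(filter_levels) == len(topic_levels)


accepted = "$aws/things/applecreek/shadow/update/accepted"
print(topic_matches("$aws/things/+/shadow/update/accepted", accepted))
print(topic_matches("$aws/things/applecreek/shadow/#", accepted))
print(topic_matches("$aws/things/+/shadow/update", accepted))
```

The first two filters match the accepted topic; the third does not, because it has one level fewer and no # to absorb the rest.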

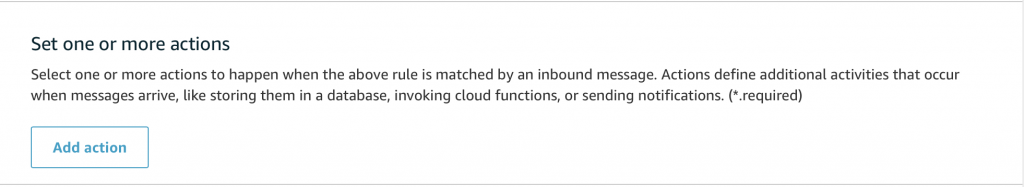

Scroll down to the “Set one or more actions” section and click on “add action”.

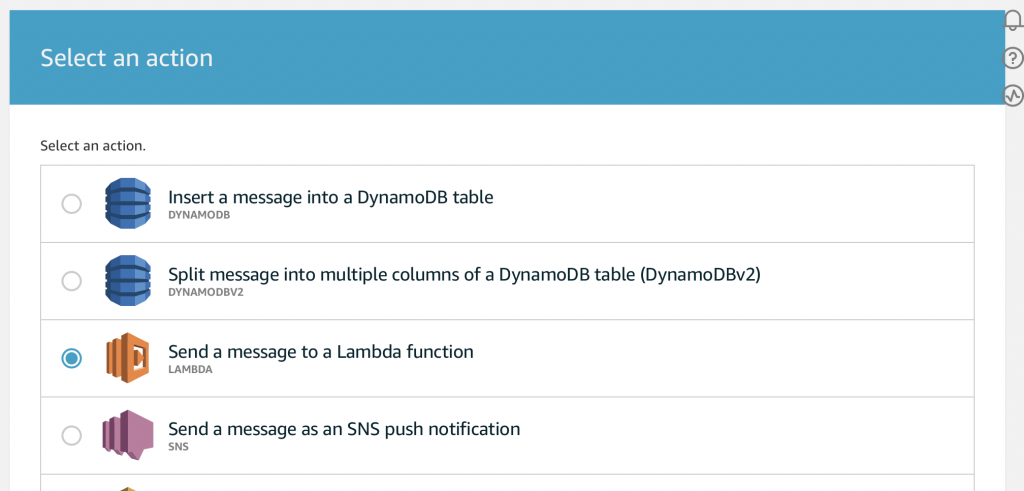

This screen is amazing, as there are many, many things that you can do (I should try some of the other possibilities). But for this article, just pick “Send a message to a Lambda function”.

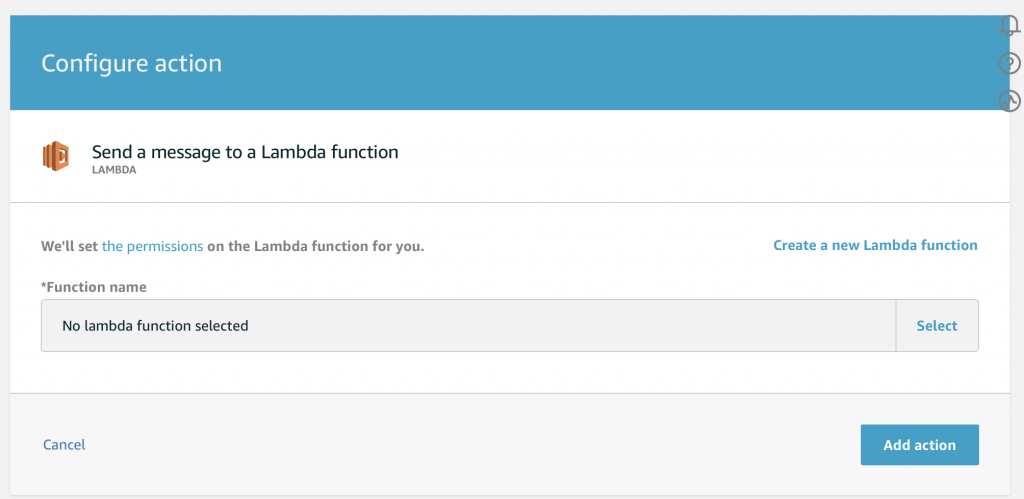

Then press “Select” to pick out the function.

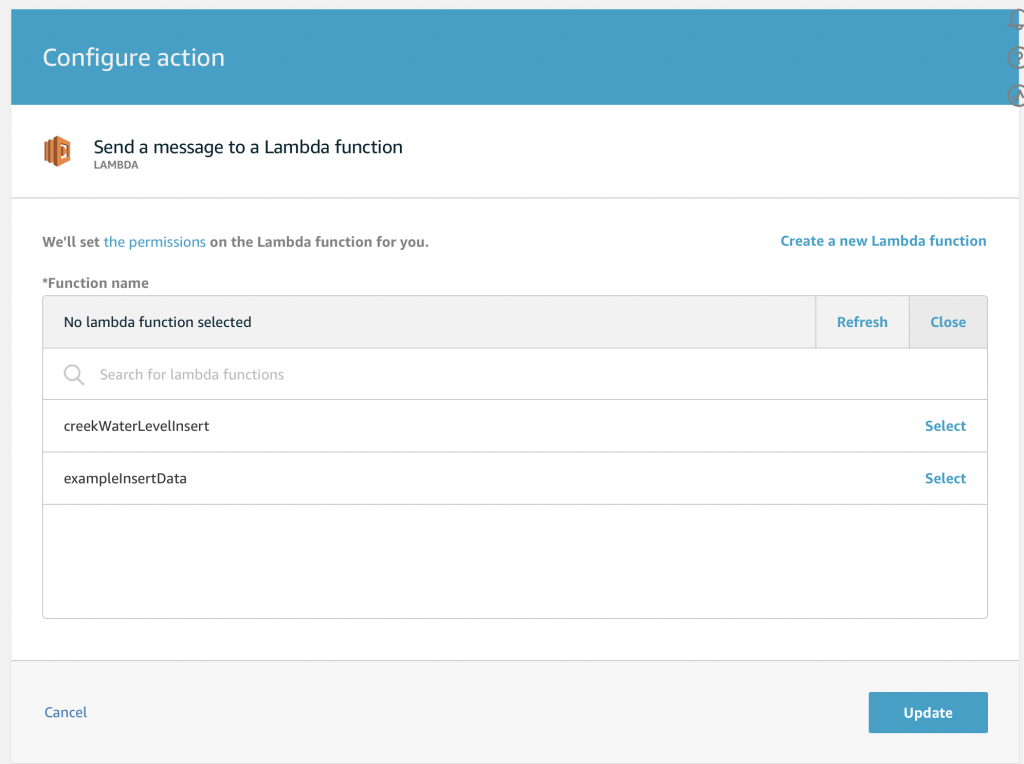

Then you will see all of your Lambda functions. I’ll pick “creekWaterLevelInsert”, which is the function I created to take the JSON data and insert it into my AWS RDS MySQL database.
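As a reminder of what a function like that does with the message, here is a rough sketch, not the actual creekWaterLevelInsert code. It assumes the rule forwards the whole shadow "accepted" document, that the readings live under `state.reported` with the hypothetical field names `depth` and `temperature`, and that the table is called `creekdata`. The `%s` placeholders assume a MySQL driver such as PyMySQL, which is not imported here so the sketch stays self-contained.

```python
# Hypothetical table and column names, chosen only for this illustration.
INSERT_SQL = "INSERT INTO creekdata (depth, temperature) VALUES (%s, %s)"


def build_insert(event: dict) -> tuple:
    """Turn a shadow 'accepted' message into a parameterized INSERT."""
    reported = event["state"]["reported"]
    params = (float(reported["depth"]), float(reported["temperature"]))
    return INSERT_SQL, params


def lambda_handler(event, context, connection=None):
    """Lambda entry point; `connection` is an optional DB-API connection.

    Passing the connection in is an assumption made so the sketch can run
    without a database; a real function would open its own connection.
    """
    sql, params = build_insert(event)
    if connection is not None:
        cursor = connection.cursor()
        cursor.execute(sql, params)  # parameters are escaped by the driver
        connection.commit()
    return {"sql": sql, "params": params}


# Made-up sample message in the shape of a shadow "accepted" document.
sample_event = {"state": {"reported": {"depth": 0.25, "temperature": 15.0}}}
print(lambda_handler(sample_event, None))
```

Keeping the SQL parameterized, rather than formatting the values into the string, means a malformed message cannot inject SQL into the insert.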

Once you press “Update”, you will see that you have the newly created rule…
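If you would rather script this than click through the console, the same rule can be described as a payload for the IoT `create_topic_rule` API. The sketch below only builds the payload dictionary. The topic, description, and function ARN (including the account number and region) are placeholders I made up, and the exact query in my rule may differ.

```python
def build_rule_payload(topic: str, function_arn: str, description: str) -> dict:
    """Build a topic rule payload that forwards every attribute to a Lambda."""
    return {
        # SQL-like rule query statement: select all attributes from the topic.
        "sql": f"SELECT * FROM '{topic}'",
        "description": description,
        # One action: send the message to the Lambda function.
        "actions": [{"lambda": {"functionArn": function_arn}}],
        "ruleDisabled": False,
    }


payload = build_rule_payload(
    topic="$aws/things/applecreek/shadow/update/accepted",  # assumed topic
    function_arn=(
        # Placeholder ARN: the account number and region are invented.
        "arn:aws:lambda:us-east-1:123456789012:function:creekWaterLevelInsert"
    ),
    description="Send creek shadow updates to the insert function",
)
print(payload["sql"])

# With boto3 installed and credentials configured, the payload could be sent
# like this (the rule name is hypothetical):
#   boto3.client("iot").create_topic_rule(
#       ruleName="creekInsertRule", topicRulePayload=payload)
```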

The Test Console

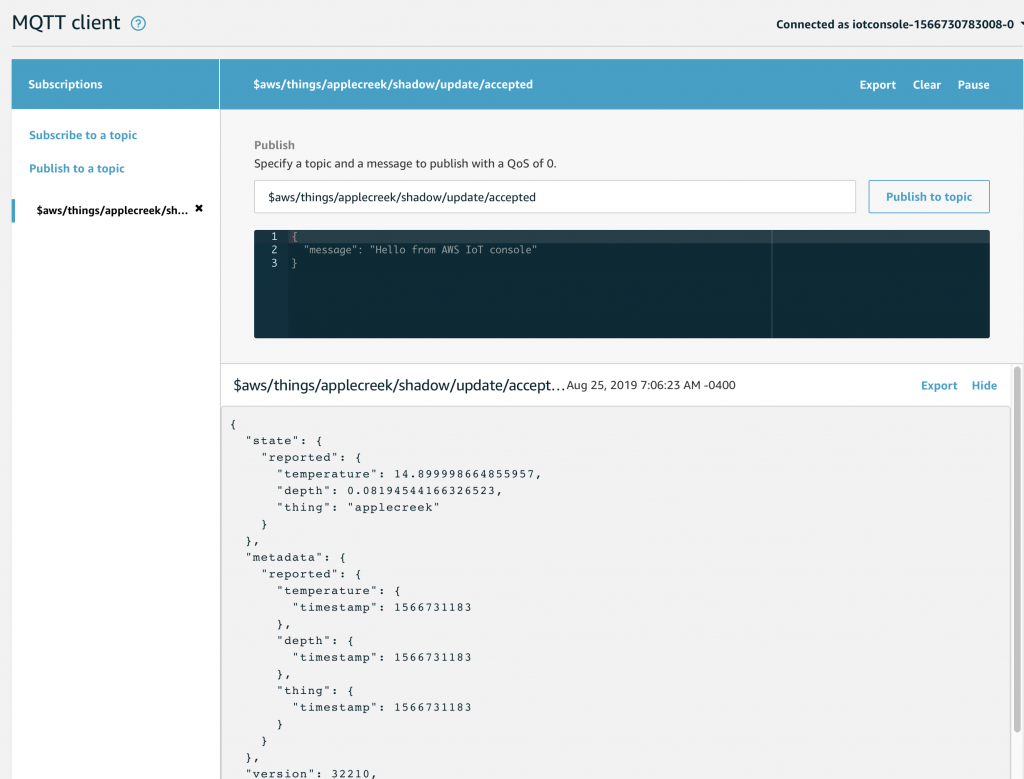

Now that the rule is set up, let’s go to the AWS MQTT Test Client and wait for an update to the “applecreek” thing shadow. You might recall that when a shadow update message is published to $aws/things/applecreek/shadow/update, and that message is accepted, AWS publishes a response to $aws/things/applecreek/shadow/update/accepted.

On the test console, I subscribe to that topic. After a bit of time I see a message published saying that at 7:06 AM Apple Creek is at 0.08.. feet and the temperature in my barn is 14.889 degrees.

But, did it work?

AWS CloudWatch

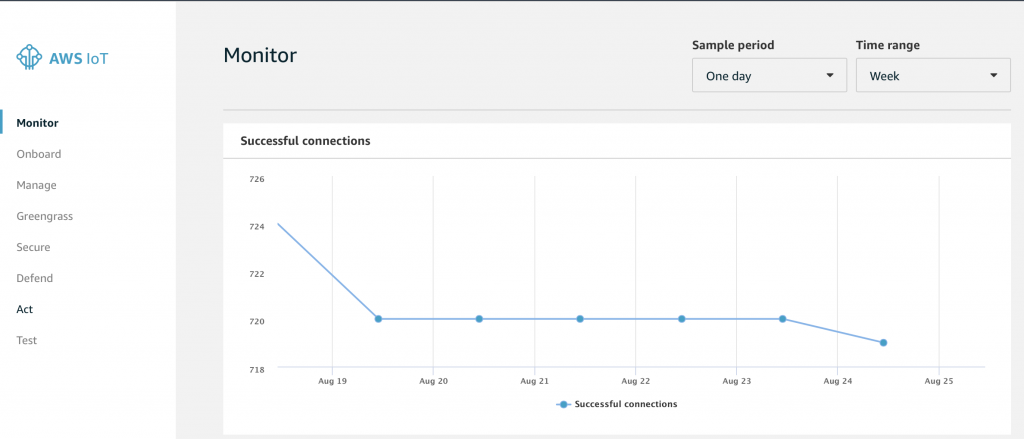

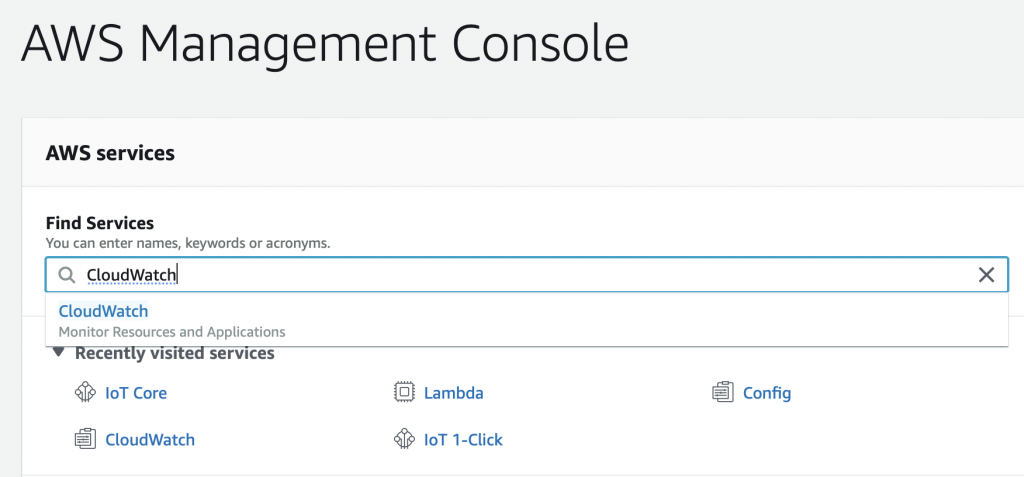

There are a couple of ways to figure this out. I start by going to AWS CloudWatch, which is the AWS consolidator for error logs and the like. To get there, search for “CloudWatch” on the AWS Management Console.

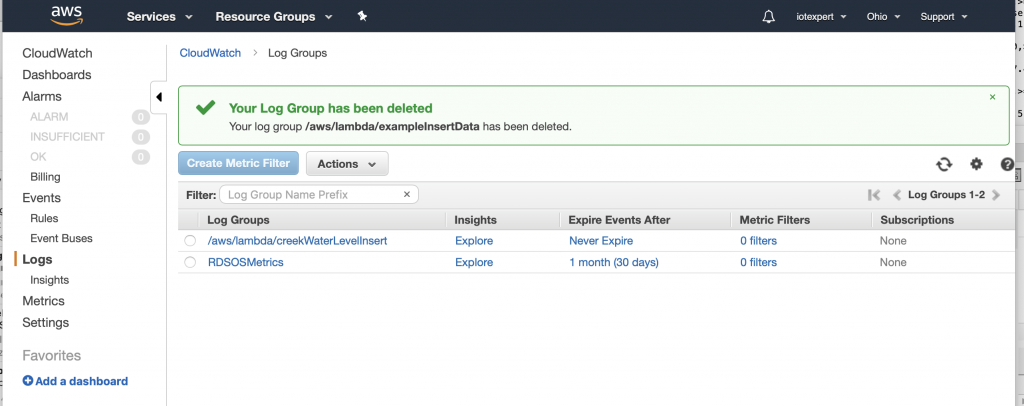

Then click on “logs”. Notice that the log at the top is called “…/creekWaterLevelInsert”. As best I can tell, many things in AWS generate debugging or security messages which go to these log files.

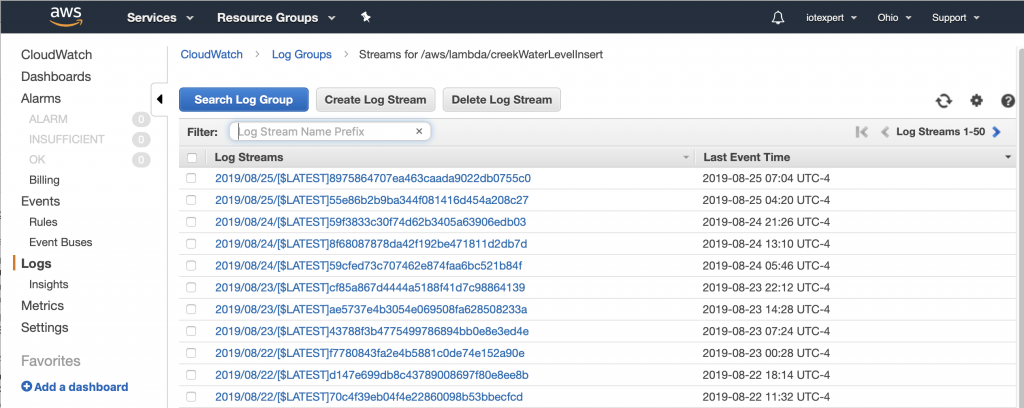

If you click on /aws/lambda/creekWaterLevelInsert, you can see that there are a bunch of different log streams for this Lambda function. These streams are just ranges of time where events have happened (I have actually been running this rule for a while).

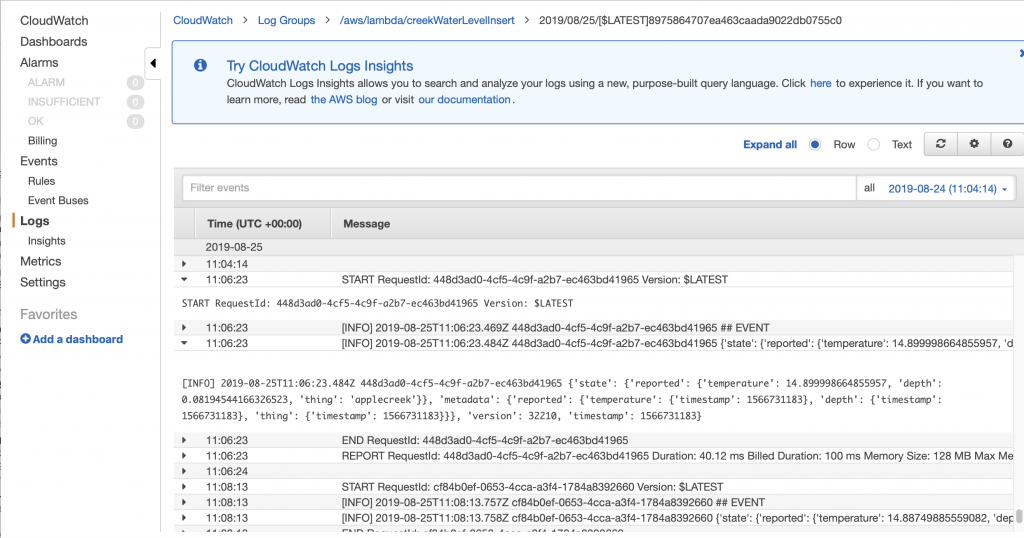

If I click on the top one and scroll to the bottom, you can see that at “11:06:23” the function was run, and you can see the JSON message which was sent to the function. You might ask yourself: 11:06 … up above it was 7:06 … why the 4-hour difference? The answer is that the AWS logs are all recorded in UTC, but I save my messages in Eastern time, which is currently UTC-4. (In hindsight, I think that you should record all times in UTC.)
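The arithmetic is simple, but here is a small sketch of the conversion. It uses a fixed UTC-4 offset to match what I described above. The calendar date is invented for the example, and code that must handle the daylight saving switch would want a real time zone database instead of a fixed offset.

```python
from datetime import datetime, timedelta, timezone

# Fixed UTC-4 offset, i.e. Eastern time while daylight saving is in effect.
EASTERN_DAYLIGHT = timezone(timedelta(hours=-4), "EDT")


def utc_to_eastern_daylight(utc_time: datetime) -> datetime:
    """Convert a timezone-aware UTC datetime to the fixed UTC-4 offset."""
    return utc_time.astimezone(EASTERN_DAYLIGHT)


# The time of day comes from the CloudWatch log; the date is made up.
log_time = datetime(2020, 6, 1, 11, 6, 23, tzinfo=timezone.utc)
local_time = utc_to_eastern_daylight(log_time)
print(local_time.strftime("%H:%M:%S %Z"))  # prints "07:06:23 EDT"
```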

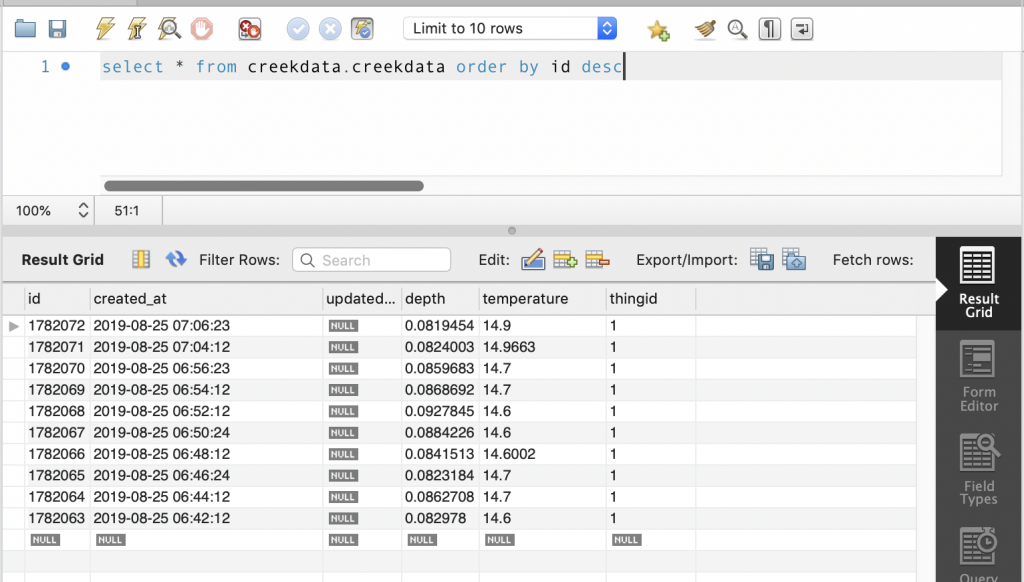

The real way to check that the Lambda function worked correctly is to verify that the data was inserted into my RDS MySQL database. To find this out, I open a connection using MySQL Workbench (which I wrote about here). I ask it for the most recent data inserted into the database, and sure enough, I can see that at 7:06 the temperature was 14.9 and the depth was 0.08… sweet.
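The "most recent data" check boils down to sorting by the timestamp column and taking one row. Here is a small sketch of that query. It runs against an in-memory SQLite database as a stand-in for the RDS MySQL server so that it works anywhere, and the table name, column names, and sample rows are all made up.

```python
import sqlite3

# This query text is also valid MySQL; table and columns are hypothetical.
LATEST_SQL = (
    "SELECT created_at, depth, temperature "
    "FROM creekdata ORDER BY created_at DESC LIMIT 1"
)


def latest_reading(connection):
    """Return the newest row as a (created_at, depth, temperature) tuple."""
    return connection.execute(LATEST_SQL).fetchone()


# In-memory stand-in database with two invented readings.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE creekdata (created_at TEXT, depth REAL, temperature REAL)"
)
conn.executemany(
    "INSERT INTO creekdata VALUES (?, ?, ?)",
    [
        ("2020-06-01 07:01:00", 0.25, 15.0),
        ("2020-06-01 07:06:00", 0.25, 14.9),
    ],
)
print(latest_reading(conn))
```

Sorting text timestamps works here only because they are in year-first, zero-padded form; a proper datetime column avoids that dependency.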

For now this series is over. However, what I really need to do next is write a web server that runs on AWS to display the data… but that will be for another day.

2 Comments

Maybe you can use QuickSight to display your data

I think that is a good suggestion. When I did the original work I wrote everything… and I’d like to use some analytics package rather than write everything.

Alan